Inference in Machine Learning is the process of making predictions using a trained model. It applies learned information to new data.

Machine learning involves imparting knowledge to algorithms through data, enabling them to learn patterns and make decisions. The true test of these learned models comes during inference, where the model’s predictive power is unleashed on fresh, unseen data to provide actionable insights or decisions.

This final step in the machine learning pipeline is critical, because it shows how well a model generalizes beyond the data it was trained on. With enough computational power and a well-chosen algorithm, models can reach high accuracy in areas ranging from healthcare diagnostics to financial forecasting. The quality of inference depends directly on the quality of the training data and the robustness of the model; both are needed for predictions to be reliable and valuable in real-world applications.

Inference In Machine Learning: The Basics

When a machine learns, it’s like when a child goes to school. First, they learn. Then they use what they have learned. In the world of Machine Learning (ML), this ‘using’ part is called ‘Inference’. Let’s dive into the nuts and bolts of what this really means.

Defining Inference In AI

Inference in AI is when a machine uses its brain, the model, to make smart guesses. It’s like taking a test after studying. The machine uses things it has seen before to make decisions about new things.

Contrasting Training And Inference Phases

Think of training and inference like baking. In training, you mix ingredients. This is when a machine learns. In inference, you eat the cake. This is when the machine shows what it has learned on new data. These are the two key parts that make machines smart.

| Training Phase | Inference Phase |
|---|---|
| Mixing ingredients (learning) | Eating cake (using) |
| Needs lots of examples | Needs new data to work on |
| Takes more time and energy | Usually faster and cheaper per prediction |

First, during training, a computer sees many examples. It tries to notice patterns. Next, in inference, the computer uses these patterns. It makes smart guesses about new stuff it sees. It’s a step-by-step journey from learning to doing.
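To make the two phases concrete, here is a minimal sketch in plain Python. It assumes a very simple model (a straight line fitted by least squares) and made-up data; the function names `train` and `predict` are illustrative, not taken from any particular library.

```python
# Training phase: learn a slope and an intercept from labelled examples.
def train(xs, ys):
    """Fit y = w * x + b by ordinary least squares (closed form)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var = sum((x - mean_x) ** 2 for x in xs)
    w = cov / var
    b = mean_y - w * mean_x
    return w, b


# Inference phase: apply the learned parameters to unseen input.
def predict(model, x):
    w, b = model
    return w * x + b


# Made-up training data: hours studied -> test score.
hours = [1.0, 2.0, 3.0, 4.0]
scores = [52.0, 54.0, 56.0, 58.0]

model = train(hours, scores)   # learning happens here, ahead of time
print(predict(model, 5.0))     # inference on a new input: 60.0
```

Notice that `predict` never looks at the training data. It only needs the two numbers that training produced.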

Key Components Of Inference

Imagine teaching a friend to play chess. You explain the rules and show some moves. Now, they start making their moves, thinking on their feet. This is like machine learning inference. In a machine’s world, inference means using learned knowledge to make decisions. Here’s a breakdown of what makes it tick.

Models And Algorithms

Think of models as finished puzzles. An algorithm is like puzzle instructions. Together, they power a machine’s brain. The better the model, the smarter the choices. Here are the key parts:

- Machine learning model: A result of training, ready to use.

- Algorithm: Step-by-step recipe to create models.

- Weights: Numbers learned during training that determine what the model outputs.
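One way to see how these three parts relate is a tiny perceptron, sketched below under simple assumptions. The training function plays the role of the algorithm, the dictionary it returns is the model, and the numbers inside it are the weights. The names `perceptron_train` and `model_predict` are hypothetical, and the data is the logical AND of two inputs.

```python
def perceptron_train(examples, epochs=10, lr=1.0):
    """The algorithm: nudge the weights every time a prediction is wrong."""
    weights = [0.0, 0.0]
    bias = 0.0
    for _ in range(epochs):
        for (x1, x2), label in examples:
            score = weights[0] * x1 + weights[1] * x2 + bias
            predicted = 1 if score > 0 else 0
            error = label - predicted
            weights[0] += lr * error * x1
            weights[1] += lr * error * x2
            bias += lr * error
    # The model: the learned weights, ready to use.
    return {"weights": weights, "bias": bias}


def model_predict(model, x1, x2):
    """Inference: the weights alone decide the output."""
    w = model["weights"]
    score = w[0] * x1 + w[1] * x2 + model["bias"]
    return 1 if score > 0 else 0


# Toy data: output is 1 only when both inputs are 1.
and_examples = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
model = perceptron_train(and_examples)
print(model)
print(model_predict(model, 1, 1))
```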

Data And Input Variables

Inference can’t happen in a vacuum. It needs data – the lifeblood of any model. Here’s how it looks:

- Input variables: These are like questions you ask the model.

- Feature data: Like clues, they help the model answer questions.

- Data can be anything – numbers from a sensor, pictures, or words.
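In practice, raw input usually has to be turned into numbers before a model can use it. The sketch below shows one possible way to do that; the field names (`temperature_c`, `humidity_pct`, `is_weekend`) are made up for illustration.

```python
def to_features(record):
    """Turn one raw record into the numeric vector a model expects."""
    return [
        float(record["temperature_c"]),          # already numeric
        float(record["humidity_pct"]) / 100.0,   # scale a percentage to 0..1
        1.0 if record["is_weekend"] else 0.0,    # encode a yes/no value
    ]


reading = {"temperature_c": 21, "humidity_pct": 40, "is_weekend": True}
print(to_features(reading))
```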

Inference Engines

Now we reach the engine room. The inference engine is where models come alive. It takes data, consults the model, and delivers answers. Let’s see its parts:

- A software component using the model to make decisions.

- It might run on a computer, a server, or even your phone!

- Speed and accuracy are its best friends.
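As a rough illustration, an inference engine can be as small as a class that loads saved parameters and answers requests. The `InferenceEngine` class below is a hypothetical sketch, assuming a linear model stored as JSON; real engines add batching, hardware acceleration, and much more.

```python
import json


class InferenceEngine:
    """Hypothetical minimal engine: holds a trained linear model and serves predictions."""

    def __init__(self, model_json):
        params = json.loads(model_json)
        self.weights = params["weights"]
        self.bias = params["bias"]

    def predict(self, features):
        # Reject inputs that do not match the shape the model was trained on.
        if len(features) != len(self.weights):
            raise ValueError(
                "expected %d features, got %d" % (len(self.weights), len(features))
            )
        return sum(w * x for w, x in zip(self.weights, features)) + self.bias


# Made-up parameters standing in for the result of an earlier training run.
saved_model = '{"weights": [0.5, -1.0], "bias": 2.0}'
engine = InferenceEngine(saved_model)
print(engine.predict([4.0, 1.0]))  # 0.5*4 - 1.0*1 + 2.0 = 3.0
```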

Types Of Inference Techniques

Exploring the realm of Machine Learning (ML), we uncover the pivotal role of inference techniques. Inference is the method by which ML algorithms draw conclusions from data patterns.

Understanding different types of inference techniques is crucial. It’s a cornerstone for ML applications. Let’s delve into three primary techniques. Each plays a unique role in how algorithms interpret data and learn from it.

Deductive Reasoning

Deductive reasoning is a logical process. It starts with a general statement and reaches a specific conclusion. It’s like a top-down approach in problem-solving.

Applying deductive reasoning in ML involves creating models. These models operate on strict rules and defined principles. They ensure that the conclusions are a direct result of the initial premises.
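A small rule engine shows the idea. This is a minimal sketch of forward chaining, assuming rules written as (premises, conclusion) pairs; the facts and rules are made up for illustration.

```python
def forward_chain(facts, rules):
    """Apply if-then rules repeatedly until no new facts can be derived."""
    derived = set(facts)
    changed = True
    while changed:
        changed = False
        for premises, conclusion in rules:
            if conclusion not in derived and all(p in derived for p in premises):
                derived.add(conclusion)
                changed = True
    return derived


# General statements (rules) plus one specific fact.
rules = [
    (("is_human",), "is_mortal"),
    (("is_mortal", "is_greek"), "is_mortal_greek"),
]
facts = {"is_human"}

print(sorted(forward_chain(facts, rules)))
```

Every conclusion here follows strictly from the premises. Nothing is guessed.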

Inductive Reasoning

Inductive reasoning contrasts with deduction. It starts with observations and builds up to generalizations.

In ML, inductive reasoning steps from specific instances. It constructs broad models. These models predict and make decisions on new, unseen data.
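Here is a minimal sketch of induction: a general rule (a single cut-off value) is learned from a handful of specific observations. The data and the `learn_threshold` helper are hypothetical.

```python
def learn_threshold(examples):
    """Pick the cut-off that misclassifies the fewest training examples."""
    values = sorted(x for x, _ in examples)
    # Candidate cut-offs sit halfway between neighbouring observations.
    candidates = [(a + b) / 2 for a, b in zip(values, values[1:])]

    def errors(t):
        return sum((x > t) != label for x, label in examples)

    return min(candidates, key=errors)


# Made-up observations: (temperature in C, did the machine overheat?)
observations = [
    (60, False), (65, False), (70, False),
    (80, True), (85, True), (90, True),
]

threshold = learn_threshold(observations)
print(threshold)        # 75.0
print(95 > threshold)   # the general rule applied to a new case: True
```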

Abductive Reasoning

Abductive reasoning is about forming plausible explanations.

ML models use abductive reasoning to infer the most likely cause behind the data patterns. It’s a best-guess scenario approach.
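One simple way to sketch a best guess is to score each possible explanation by how common it is and how well it explains the observation, then pick the winner. The probabilities below are invented for illustration, and `most_likely_cause` is a hypothetical helper.

```python
def most_likely_cause(priors, likelihoods, observation):
    """Return the cause with the highest prior * likelihood for the observation."""
    scores = {
        cause: priors[cause] * likelihoods[cause].get(observation, 0.0)
        for cause in priors
    }
    return max(scores, key=scores.get)


# Made-up numbers: how common each cause is, and how often it leads to wet grass.
priors = {"rain": 0.3, "sprinkler": 0.1, "nothing": 0.6}
likelihoods = {
    "rain": {"wet_grass": 0.9},
    "sprinkler": {"wet_grass": 0.8},
    "nothing": {"wet_grass": 0.05},
}

print(most_likely_cause(priors, likelihoods, "wet_grass"))  # rain
```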

Each technique contributes to the robustness of ML models. They equip machines to reason and learn, supporting intelligent decision-making across various domains.

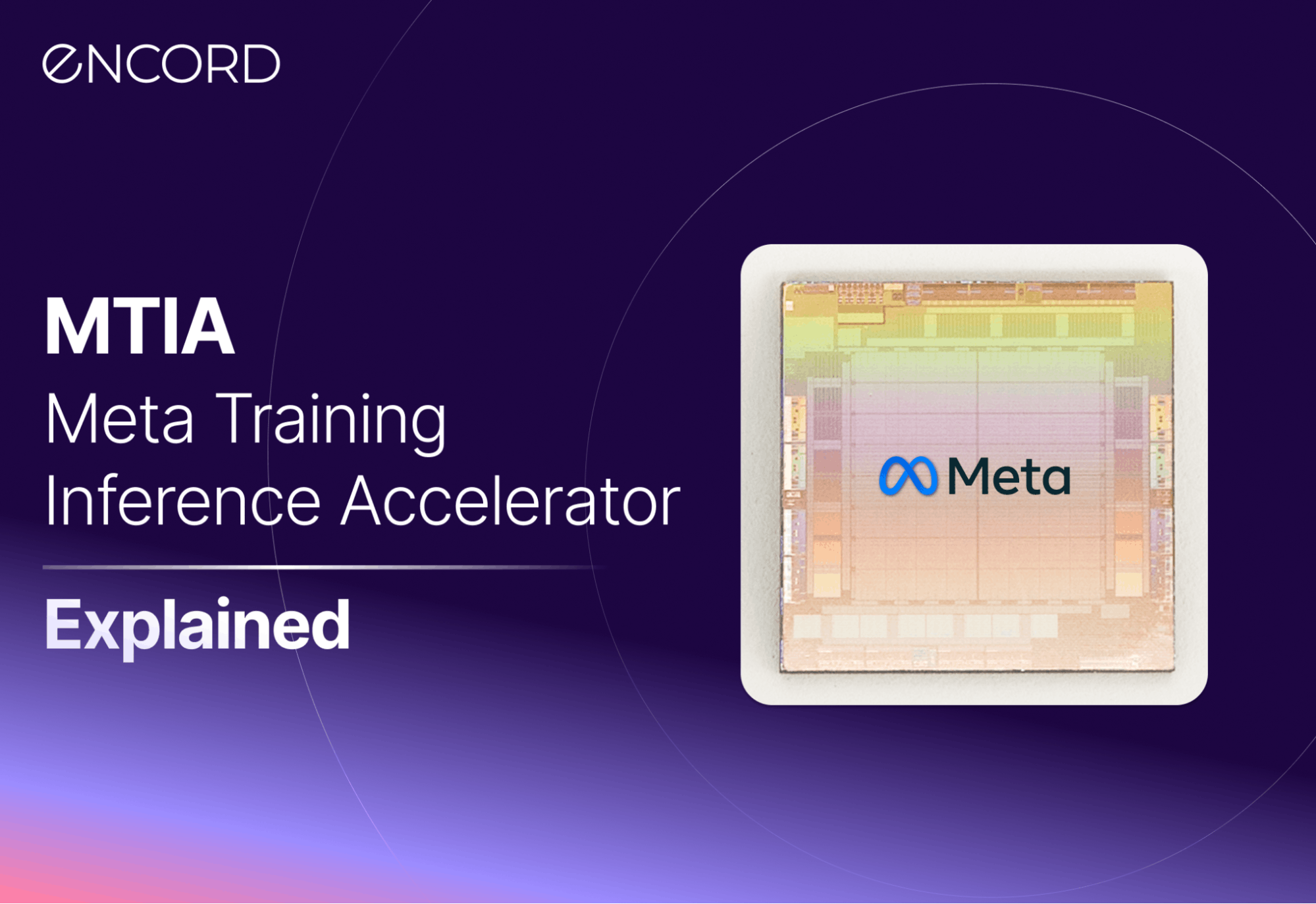

Credit: encord.com

Applications Of Inference In Various Industries

The world of machine learning is vast, with its tendrils reaching into virtually every industry. In particular, the process of inference in machine learning has opened the door to a plethora of applications. By using inference, machines can analyze data to make predictions, spot trends, and enhance decision-making. Let’s explore how various industries benefit from this advanced technology.

Healthcare Diagnostics

In the critical field of healthcare, machine learning inference is a game-changer. Early and accurate illness detection can save lives. Here’s how it works:

- Data Analysis: Machines process vast amounts of patient data.

- Pattern Recognition: They identify patterns corresponding to diseases.

- Diagnosis Speed: Quicker diagnoses lead to faster treatments.

Thus, with the support of inference, healthcare providers offer smarter, personalized care.

Fraud Detection In Finance

In the dynamic finance sector, fraud and other illicit activities are notable concerns. Machine learning inference combats these threats by:

- Analyzing Transactions: Scrutinizing each one for irregularities.

- Spotting Anomalies: Highlighting unusual patterns in real-time.

- Minimizing Losses: Preventing fraud before it impacts finances.

Financial institutions thus rely on machine learning to maintain integrity and customer trust.
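As a simplified illustration of spotting anomalies, the sketch below flags a transaction when its amount is far from a customer's usual spending, measured in standard deviations. Real fraud systems use far richer models; the threshold of 3 and the amounts are assumptions made for this example.

```python
from statistics import mean, stdev


def is_anomalous(history, amount, z_threshold=3.0):
    """Flag an amount that lies more than z_threshold standard deviations from the mean."""
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        # All past amounts are identical, so any different amount stands out.
        return amount != mu
    return abs(amount - mu) / sigma > z_threshold


# Made-up spending history for one customer.
past_amounts = [20.0, 25.0, 22.0, 30.0, 27.0, 24.0]

print(is_anomalous(past_amounts, 26.0))    # False
print(is_anomalous(past_amounts, 500.0))   # True
```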

Predictive Maintenance In Manufacturing

Manufacturing thrives on efficiency and uptime. Machine learning inference steps in to ensure optimal machine performance. It achieves this by:

- Monitoring Equipment: Constantly checking for signs of wear.

- Anticipating Failures: Predicting when machines might break down.

- Planning Maintenance: Scheduling repairs before issues arise.

Manufacturers, therefore, minimize downtime and keep production lines moving smoothly with inference.
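To give a feel for anticipating failures, here is a minimal sketch that fits a straight line to wear readings and estimates how many cycles remain before a limit is reached. It assumes one reading per cycle and a roughly linear trend; the readings, the limit, and the `cycles_until_limit` helper are all hypothetical.

```python
def cycles_until_limit(readings, limit):
    """Fit a line to the readings and extrapolate to the point where it hits `limit`."""
    n = len(readings)
    if n < 2:
        return None  # not enough data to estimate a trend
    xs = range(n)
    mean_x = sum(xs) / n
    mean_y = sum(readings) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, readings))
    var = sum((x - mean_x) ** 2 for x in xs)
    slope = cov / var
    if slope <= 0:
        return None  # no upward trend, so no crossing is predicted
    intercept = mean_y - slope * mean_x
    crossing = (limit - intercept) / slope
    # Cycles remaining after the most recent reading (never negative).
    return max(0.0, crossing - (n - 1))


# Made-up wear measurements, one per production cycle.
wear = [1.0, 1.5, 2.0, 2.5]
print(cycles_until_limit(wear, limit=5.0))  # 5.0
```

A maintenance team could schedule a repair comfortably before that estimate runs out.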

Challenges And Considerations

Inference in Machine Learning is crucial for models to make predictions on new data. But it brings its own set of challenges and considerations. Overcoming these hurdles is essential to ensure the reliability and efficiency of Machine Learning applications.

Balancing Speed And Accuracy

Making accurate predictions quickly is a balancing act. Machine Learning models must be both fast and precise. High accuracy can sometimes mean slower inference, especially with complex models. On the other hand, fast predictions may lead to a drop in accuracy. It is important to find a middle ground that serves the end-use case effectively.
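One common way to find that middle ground is to set a latency budget and choose the most accurate model that fits within it. The sketch below assumes accuracy and latency have already been measured for each candidate; the numbers and the `pick_model` helper are made up for illustration.

```python
def pick_model(candidates, latency_budget_ms):
    """Return the most accurate candidate whose latency fits the budget, else None."""
    eligible = [c for c in candidates if c["latency_ms"] <= latency_budget_ms]
    if not eligible:
        return None
    return max(eligible, key=lambda c: c["accuracy"])


# Hypothetical measurements for three versions of a model.
candidates = [
    {"name": "small", "accuracy": 0.88, "latency_ms": 5},
    {"name": "medium", "accuracy": 0.92, "latency_ms": 20},
    {"name": "large", "accuracy": 0.95, "latency_ms": 120},
]

choice = pick_model(candidates, latency_budget_ms=50)
print(choice["name"])  # medium
```

With a 50 ms budget, the largest model is ruled out even though it is the most accurate.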

Dealing With Uncertainty And Ambiguity

Predictions are not always black and white. Models often deal with uncertain or incomplete information. The challenge is to achieve a level of certainty that enables confident decision-making. Probabilistic models and techniques like Bayesian inference can help. They weigh the likelihood of different outcomes to make informed predictions.
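A short worked example of Bayes' rule shows how this weighing works. The figures are assumptions chosen for illustration: a condition that affects 1% of people, a test that catches 95% of real cases, and a 5% false positive rate.

```python
def posterior(prior, sensitivity, false_positive_rate):
    """P(condition | positive result) computed with Bayes' rule."""
    true_positive = prior * sensitivity
    false_positive = (1 - prior) * false_positive_rate
    return true_positive / (true_positive + false_positive)


p = posterior(prior=0.01, sensitivity=0.95, false_positive_rate=0.05)
print(round(p, 3))  # 0.161
```

Even after a positive result, the chance of actually having the condition is only about 16%, because the condition is rare to begin with. This is why a model's raw output often needs to be read alongside how common each outcome is.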

The Role Of Data Quality

- Data cleanliness is paramount.

- Inaccurate or biased data can skew results.

- Data preparation and cleaning are time-consuming but necessary.

- Quality data ensures models are trained effectively for accurate inference.

Without quality data, a model’s predictions are unreliable. Ensuring clean, relevant, and well-structured data is a key part of successful Machine Learning projects.


The Future Of Inference In AI

Peering into the crystal ball, the future of inference in AI holds promise as well as challenges. It paves the way for machines that think like us, understand the world, and make decisions autonomously.

Advancements In Neural Networks

Neural networks mimic the human brain’s connections. They’re crucial for AI’s ability to learn.

Recent strides in this area are jaw-dropping. We see neural networks growing in size and smarts.

- Speed – Faster processing means quicker learning.

- Efficiency – Newer models and hardware aim to do more work with less power.

- Complex tasks – AI can handle jobs that once stumped it.

Incorporating Human-like Reasoning

AI brains are getting an upgrade. They’re learning to think like we do – and that’s a big deal.

- Logic and causality – Researchers are building AI systems that reason about cause and effect.

- Common sense – Fewer silly mistakes. AI is getting more streetwise.

- Decision-making – AI choices are becoming more reliable and more human-like.

Ethical Implications And Accountability

With great power comes great responsibility, and AI is no exception.

As AI grows, so does the need for rules to keep things fair and safe.

| Aspect | Importance |
|---|---|
| Transparency | Knowing how AI makes decisions is key. |
| Privacy | Personal data must stay private, even from AI. |
| Accountability | When AI errs, we must know who to hold responsible. |


Frequently Asked Questions About Inference In Machine Learning

What Does Inference Mean In Ml?

Inference in machine learning refers to the process of making predictions using a trained model. Given new input data, the model uses its learned parameters to produce outputs, such as classifications or estimated values.

Why Is Inference Important In Ml?

Inference is crucial because it’s the practical use of a trained model. It allows for decision-making in real-world applications, from recognizing speech to recommending products or diagnosing diseases.

How Does Inference Differ From Training?

Training involves learning from a dataset to create a model, while inference is applying the model to new data. Training happens up front and is repeated only when the model needs updating, whereas inference runs on every new piece of data.

Can Inference Be Real-time In Ml?

Yes, inference can be real-time. This means the model processes input data and provides outputs immediately, which is vital for applications requiring instant decisions like autonomous vehicles or fraud detection.

Conclusion

Understanding inference in machine learning is the key to unlocking the full potential of AI models. It bridges the gap between data and real-world application, turning learned information into actionable insights. As we leverage its power, the horizon for technology’s capabilities broadens, promising exciting developments in the field of artificial intelligence.